August 2025: Vancouver AI Community Meetup Recap: VanAI #20

https://photos.app.goo.gl/2zu5ht28BHvr3fYQ8

Vancouver AI Meetup #20 – Indigenous leadership and philosophical framework

Speaker: Carol Ann Hilton, CEO, Indigenomics Institute

Event: Vancouver AI Community Meetup #20 – BC AI Ecosystem Association Launch

Date: August 2025

Context: Board member introduction & ceremonial community opening

Significance: Establishing Indigenous philosophical framework for nonprofit governance

🎭 The Moment & Setting

Carol Ann stepped to the mic after Gabriel George’s Eagle Song ceremony, carrying forward the sacred space set through the traditional opening. This was the official launch of the BC AI Ecosystem Association as a nonprofit, coinciding with her board membership announcement.

The Question: “How are you showing up tonight?”

Not small talk, but a guiding inquiry from the Indigenomics Institute, now brought into AI community governance.

🌊 “What Are We Responding To?” – Core Framework

Deeper Inquiry

- What has been brought together?

- What are we responding to?

- Why are we here?

Community Relationship Building

“When we do that and we can be real with what that answer is, then we can relate to each other and we can bring the best out of humanity itself.”

Not abstract—practical guidance for how 250+ diverse participants connect beyond surface networking.

🌀 Chaos as Creative Potential

Personal AI Engagement Story

“I was fighting with my ChatGPT the other night… I took it right out to the edge of meaning.”

AI framed as a philosophical partner, not just a productivity tool.

The Chinese Chaos Symbol Reframe

Chaos = creative potential. AI transformation is opportunity for conscious creation, not fear.

Application: Community as an active response to chaos, not passive consumers of tech.

🏛️ Decolonizing Prescribed Meaning

Revolutionary Framework

“As much as meaning has been prescribed to us and each one of our heritage and legacies… there was a prescription of meaning applied onto our humanity.”

Key takeaways:

- Colonial systems imposed meanings/limits on all peoples

- AI offers chance to rewrite meaning aligned with real values

- Shared experience of prescribed limits → shared liberation potential

- Tech adoption as liberation vs. further colonization

Freedom Through Consciousness

Understanding imposed prescriptions → making conscious choices → creating new freedoms.

🚣 “In This Canoe Together” – Partnership Philosophy

Board Membership Significance

Carol Ann’s board role = commitment to embedding Indigenous values in governance.

Relationship with Kris Krüg

Public recognition of friendship & collaboration:

- Kris = CTO of Indigenomics Institute

- Co-built indigenomics.ai platform

- Shared values: tech for community flourishing

Family & Intergenerational Recognition

Direct acknowledgment of Kris’s parents present:

“Where’s Mama Krug… did this kid come out that cool?”

— Rooted leadership in family/ancestral context.

🌟 Impact on Community Direction

Ceremonial Practice as Governance

Indigenous ceremony not tokenistic → structural requirement for all operations.

Philosophical Depth Integration

Kept the community from being “just tech networking.” Brought consciousness, meaning, and purpose to the center.

Values-Driven Tech Development

Indigenous worldviews as methodological upgrades, not decoration.

Economic Justice Integration

Connected AI community to decolonization & justice movements—AI as a tool for liberation.

💫 Lasting Influence on BC AI Ecosystem

Carol Ann’s opening speech established her as philosophical anchor. It ensured BC AI Ecosystem Association will:

- ✅ Maintain ceremonial practice as governance structure

- ✅ Ground tech in traditional wisdom

- ✅ Prioritize relationships over networking

- ✅ Continuously question meaning & purpose

- ✅ Connect AI to economic justice & decolonization

In 5 minutes, she laid the philosophical foundation for everything that followed—ensuring Indigenous wisdom guides, not just decorates, the community’s technological journey.

Wisdom Slurp

SUMMARY

- Kris Krüg hosted Vancouver AI Community Meetup #20 and the BC AI Ecosystem Association nonprofit launch, featuring Jos Duncan’s keynote, Peter Bittner on upskilling, ceremony, and Rival hackathon showcases.

IDEAS:

- Open gatherings grounded in ceremony align community energy and values before exploring technology’s possibilities and risks.

- Love as an organizing principle for AI policy reframes advocacy toward dignity, equity, abundance, and joy.

- Personal AI ethics policies clarify boundaries, align behavior with values, anticipate tempting gray areas before decisions.

- Transparent disclosure of AI use rebuilds trust in media products and storytelling without diminishing creative experimentation.

- Indigenous teachings foresee a planetary web; honor connection, integrity, and responsibility when building computational webs together.

- Ceremony and song cultivate attention, gratitude, and humility, anchoring technical work in place-based relationships and history.

- Hackathons catalyze data storytelling inventions that democratize insight through interactive maps, voices, games, and music experiences.

- Vibe coding shifts focus from low-level implementation to user experience, narrative, and meaningful feature design priorities.

- Interactive, AI-guided datasets become choose-your-own adventures, surfacing patterns, explanations, next steps, and synthesized takeaways for decision-makers.

- Music generated from survey responses translates statistics into empathy, strengthening communal understanding and emotional resonance together.

- Open-source versus proprietary debates demand nuanced advocacy that punches up, not down, while protecting artists’ livelihoods.

- Blockchain-based provenance could enable attribution, licensing, and compensation without crushing open innovation or research access ecosystems.

- Upskilling is the bottleneck; organizational change, safety to experiment, and clear frameworks beat new models alone.

- AI literacy blends hard skills, human judgment, and domain expertise; people should run loops, not machines.

- We overestimate short-term impact and underestimate long-term transformation; culture lags technology’s capabilities by years and institutions.

- Kids need safe spaces to explore AI critically, together, without shame, with snacks and mentors present.

- Human soft skills—ethics, empathy, negotiation, creativity—become differentiators amplified by AI, not replaced by it in practice.

- Transparency about data limitations prevents overreach; disclaimers contextualize findings and protect responsible storytelling within public conversations.

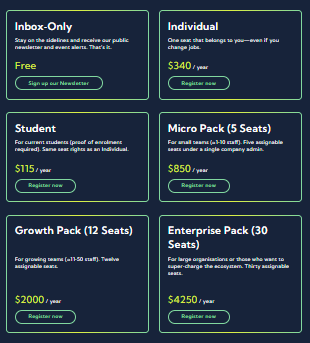

- Community-run associations can aggregate perks, funding, training, and political voice to accelerate equitable AI adoption province-wide.

- Generative audio gains soul when grounded in real community narratives, not generic prompts or aesthetics alone.

- Organize digital protests and campaigns to demand accountability from AI firms while uplifting beneficial projects locally.

- Parents, elders, and youth co-presence strengthens intergenerational AI literacy and keeps gatherings compassionate and resilient together.

- Gamified exploration and achievements encourage deeper data engagement and learning without intimidation or gatekeeping for newcomers.

- Ethical tensions surface in negotiation; self-awareness about pragmatism versus purity shapes personal AI choices and boundaries.

- Local ecosystems thrive when creative, academic, nonprofit, and industry actors collaborate on experiments and shared infrastructure.

- Ceremonial land acknowledgments connect technological futures to histories of dispossession, resilience, and living languages and responsibilities.

- AI’s environmental externalities require organizing users, workers, and communities to press for mitigations and accountability measures.

- Community data can fund art, influence policy, and teach skills when openly shared and playfully remixed.

INSIGHTS

- Ceremony isn’t decoration; it’s governance that orients technical work around place, accountability, and collective intention formation.

- Transparency converts skepticism into legitimacy; hiding AI use erodes trust faster than imperfect implementations do everywhere.

- Ethical clarity emerges before convenience; personal policies prevent rationalizations when stakes, incentives, and temptations appear suddenly.

- Open-source survives by punching upward and innovating provenance, not scapegoating community tools under corporate narratives alone.

- Upskilling beats model-chasing; culture, workflows, and incentives determine realized value more than raw capability over time.

- Humanity’s comparative advantage becomes judgment, care, and meaning-making amplified by machines, not speed alone or volume.

- Data storytelling builds power when recipients participate, question, and navigate, rather than passively consuming dashboards alone.

- Community legitimacy grows from intergenerational presence and service, not hype cycles or model version announcements alone.

- Emotional resonance is computable but remains human-authored; context-rich inputs yield authentic outputs audiences feel as truth.

- Provincial associations transform scattered experiments into bargaining power, resource circulation, and province-wide learning flywheels for communities.

- Teaching critical thinking now includes adversarial testing of AI outputs, not just citations and logic exercises.

- Ritualized reflection—love, grief, gratitude—protects against cynicism while sustaining long-haul, justice-centered technology work across communities and generations.

- The best tools compress implementation time, expanding strategic thinking, experimentation speed, and storytelling ambition for builders.

- Organized users can reshape AI externalities faster than regulators alone by coordinating demands and publicity campaigns.

- Local datasets are civic assets; governance determines whether insights benefit communities or extractive platforms and investors.

QUOTES:

- “How are you showing up?” — Carol Ann Hilton

- “What are we responding to?” — Carol Ann Hilton

- “I lost a loved one last week” — Jos Duncan

- “When was the last time you witnessed an act of love?” — Jos Duncan

- “When was the first time you witnessed an act of love?” — Jos Duncan

- “we’re, we’re gonna talk about love and AI.” — Jos Duncan

- “I decided to create an AI ethics policy.” — Jos Duncan

- “We need spaces like this to have these kinds of conversations.” — Jos Duncan

- “I’m actually trying to build a future I wanna live in.” — Kris Krüg

- “What does that feel like to put love in the code?” — Jos Duncan

- “one day AI will be smarter than us” — Jos Duncan

- “are you ready to stop reading about the data and start experiencing it?” — Sev Raskin

- “Dystopian, fake, that’s the vibe.” — Prajwal’s cluster voiceover

- “it’s a believable mirror that lies,” — Prajwal’s cluster voiceover

- “hey, tonight what the theme was, eat life short, eat the dessert first.” — Kris Krüg

- “Can you just help our workers feel a little less scared of AI?” — Peter Bittner

- “the future is here, is just unevenly distributed.” — Peter Bittner

- “we tend to overestimate the effect of tech in the short run and underestimate the effect in the long run.” — Peter Bittner

- “Is, is AI the same as ChatGPT?” — Debbie Krug

- “healthy connection is important.” — Gabriel George

- “our world would be connected by a web,” — Gabriel George

- “kids… feel like it’s cheating in school” — Anthonia (Ethos Lab)

- “we reinvent the course every quarter.” — Peter Bittner

- “transforming data into human connection.” — Dean Shev

- “the age of reports and static dashboards is over.” — Sev Raskin

HABITS

- Begin gatherings with ceremony to center attention, gratitude, place, and shared values before technical content delivery.

- Open meetings by asking participants, ‘How are you showing up?’ to invite presence and honesty upfront.

- Disclose AI assistance explicitly in media, proposals, and social posts to maintain audience trust and accountability.

- Publish a mission-aligned AI ethics policy and update it publicly as practices evolve and feedback surfaces.

- Draft a personal AI ethics policy defining shortcuts, gray zones, and bright lines before temptation arises.

- Practice breath, reflection, and values check-ins when emotionally charged topics emerge during technical debates with colleagues.

- Prototype quickly, iterate relentlessly, and test with users; ship improvements in small, frequent cycles for learning.

- Spend more time designing meaningful features and narratives than coding boilerplate; let tools compress implementation overhead.

- Gamify data exploration with achievements to encourage curiosity, momentum, and deeper engagement without fear of failure.

- Include disclaimers about sampling, weighting, and limits whenever presenting survey insights to nontechnical audiences for context.

- Create welcoming intergenerational spaces; invite elders, parents, and youth to participate and lead during community events.

- Schedule monthly meetups and sustain them; consistency compounds relationships, learning, and shared memory across the ecosystem.

- Organize hackathons with open-ended briefs, cash prizes, and datasets to surface unexpected community talent and collaborations.

- Teach AI literacy by combining hands-on practice, case studies, and honest discussions about risks and ethics.

- Reinvent courses quarterly to stay current; collect feedback and co-design curriculum with practitioners across multiple domains.

- Use adversarial prompts and deceptive outputs to train teams’ critical thinking and verification reflexes under pressure.

- Handle logistics respectfully: clean venues, support staff, and leave spaces better than you found every time.

- Offer snacks, music, and fun challenges to make learning welcoming, social, and memorable for all participants.

- Stack member perks—training discounts, coworking time, venue access—to sustain participation and nonprofit finances over the year.

- Document everything: transcripts, datasets, repositories, videos, and dashboards; publish them for remixing and attribution by members.

- Invite constructive pushback on slides and claims; integrate corrections quickly and credit contributors for improved accuracy.

FACTS:

- Vancouver AI Community Meetup #20 coincided with the launch of the BC AI Ecosystem Association nonprofit.

- Keynote speaker Jos Duncan traveled from Philadelphia; she leads Love Now Media and media-centered AI advocacy.

- Indigenous opening featured Gabriel George acknowledging ancestors, sovereignty, language loss, and web-like cosmology connecting people today.

- Carol Ann Hilton of Indigenomics asked, ‘What are we responding to?’ and emphasized meaning-making amid chaos.

- Rival Technologies ran a three-round data storytelling hackathon; $5,000 awarded previously, another $2,500 awarded tonight too.

- Prajwal visualized long responses with embeddings and clusters, then staged roundtable dialogues among cluster-representative voices.

- Sev Raskin’s interface generated dynamic narratives, charts, and next steps from 1,001 British Columbia survey responses.

- Sev’s demo highlighted 572 working professionals (57.1%) and 341 community leaders (34.1%) among respondents in data.

- Young tech enthusiasts scored highest AI sentiment at 0.82; creative professionals showed 0.73 sentiment in comparison.

- Occasional AI users outnumbered regular users by 2.5x: 405 occasional versus 164 regular, per interface output.

- Urban versus rural AI adoption differed by 2.6% in Sev’s exploratory analysis example within presented story.

- Dean Shev composed seventeen songs from survey data and community transcripts, releasing a downloadable concept album.

- Peter Bittner emphasized upskilling, organizational change, and AI literacy over chasing incremental model performance improvements alone.

- Clients routinely ask trainers to help workers feel less scared of AI adoption at work initially.

- A community-built funding directory and ecosystem map track approximately 480 AI-related organizations across British Columbia today.

- The venue celebrated one year in the building and confirmed a second term for future events.

- Ethos Lab announced a September 11 education meetup and weekly high-school AI experimentation program in Vancouver.

- Surrey AI hosts monthly gatherings with interactive ‘Is it AI or Not’ challenges for participants locally.

- Audience debates covered Stable Diffusion, compensation for training data, and risks of strengthening proprietary dominance ecosystems.

- Replika chatbot anecdotes illustrated perceived empathy and conversational awareness in consumer AI applications reported since 2020.

- The team reinvents course curricula quarterly, customizing use cases by profession and continuously refreshing materials collaboratively.

- Members received stacked perks like training discounts, coworking access, and venue savings through the new association.
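
The respondent figures quoted in the FACTS list can be rechecked with a few lines of arithmetic. This is only a sanity-check sketch: it assumes the 1,001 survey responses cited above are the denominator for both percentages, and the helper name `share` is made up for illustration.

```python
# Recheck the survey figures quoted in the FACTS list.
# Assumption: the 1,001 responses cited there are the base for both shares.
TOTAL_RESPONSES = 1001


def share(count: int, total: int = TOTAL_RESPONSES) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


working_professionals = share(572)           # quoted as 57.1%
community_leaders = share(341)               # quoted as 34.1%
occasional_to_regular = round(405 / 164, 1)  # quoted as 2.5x

print(working_professionals, community_leaders, occasional_to_regular)
```

Under that assumption the quoted percentages and the 2.5x ratio all hold up to rounding.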

REFERENCES

- Love Now Media (media organization; AI ethics policy and advocacy)

- Google News Initiative — Blue Engine Collaborative program

- Google accelerators (founder participation and coaching)

- Upgrade.ai (training and upskilling programs)

- Indigenomics Institute (Carol Ann Hilton)

- Ethos Lab (youth AI experimentation; education meetup)

- Rival Technologies / Reach3 (market research, survey datasets)

- Angus Reid Forum (panel providing respondents)

- Stable Diffusion (open-source image model)

- Midjourney (image generation tool)

- DALL·E (image generation tool)

- New York Times lawsuit against OpenAI (training data compensation debate)

- Blockchain/tokenization for provenance and attribution (podcast and media examples)

- Claude (Anthropic model referenced during co-creation)

- Replika (conversational AI app anecdote)

- Mustafa Suleyman (AI thought leader referenced)

- IMF and McKinsey projections (macro impacts of AI)

- UC Berkeley future-of-storytelling/drone cinematography course reference

- H.R. MacMillan Space Centre / planetarium (venue)

- Bear x Bunny team (Sev Raskin and April AI)

- Surrey AI meetup (“Is it AI or Not” challenge)

- Nook coworking (member perk)

- Meta Creation Lab in Surrey (creative AI lab)

- SIGGRAPH (conference; local cross-zone meetup)

- Northeastern University (SIGGRAPH adjacent meetup venue)

- Z Space (event venue referenced in SIGGRAPH recap)

- Creative (no-code game creation platform demoed by Ahmed)

- Nuke (compositing software used for generative video)

- Prince of Persia (1989; inspiration for game-making journey)

- Vancouver Film School (VFS) affiliation mentioned

- “Energy calculator for AI” by Lionel Ringenbach (community project)

ONE-SENTENCE TAKEAWAY

- Build an AI future grounded in ceremony, love, literacy, and community power, not hype alone.

RECOMMENDATIONS

- Open every AI gathering with ceremony and intentions to align values before technical demos and debate.

- Publish a transparent AI ethics policy, disclose tool usage, and invite feedback from affected communities continually.

- Write a personal AI ethics policy clarifying boundaries, acceptable shortcuts, and attribution practices for creative work.

- Prototype interactive, AI-guided dashboards that narrate findings, suggest next steps, and allow exploratory questioning by stakeholders.

- Gamify civic datasets with achievements to boost engagement, learning depth, and equitable participation across backgrounds consistently.

- Organize digital advocacy campaigns targeting harmful practices while celebrating organizations modeling responsible, community-centered AI every month.

- Invest in AI literacy programs combining hands-on practice, ethics discussions, adversarial testing, and domain-specific applications locally.

- Create intergenerational learning spaces, inviting elders and youth to co-lead workshops and co-design curricula with snacks.

- Support open-source ecosystems while developing provenance standards enabling attribution and compensation without crushing research or experimentation.

- Integrate consentful data practices and disclaimers when sharing survey insights to avoid overgeneralization and misuse publicly.

- Launch monthly hackathons pairing new datasets with artists, developers, and civic partners to prototype community tools.

- Shift effort from boilerplate coding to feature design; use AI to accelerate scaffolding and testing cycles.

- Teach managers to evaluate AI outputs critically with structured rubrics, verification steps, and failure-case libraries internally.

- Stack member perks—training discounts, space access, grants—to incentivize participation and sustain association operations throughout the year.

- Commission community albums, zines, and exhibits translating datasets into stories people remember and share with pride.

- Encourage pushback during presentations; correct slides quickly and credit contributors for improvements, enhancing rigor and trust.

- Partner with schools to replace blanket bans with guided AI practice, reflection journals, and ethical scenarios.

- Develop youth fellowships to build local datasets, document elders’ stories, and prototype community tools each semester.

- Align funding with community priorities by publishing roadmaps, budgets, and measurable outcomes for accountability every quarter.

- Adopt a ‘humans run loops’ principle keeping judgment, oversight, and escalation paths firmly human-controlled across systems.

- Publish open templates for AI policies, consent notices, dataset cards, and advocacy letters for easy reuse.

- Celebrate contributors publicly with profiles, playlists, and showcases to sustain momentum and attract collaborators over time.

Opening the Circle

You don’t walk into a Vancouver AI meetup like you’re checking into a hotel ballroom. You step into a living organism. A hundred different storylines colliding under the planetarium dome… founders, high school hackers, elders, journalists, artists, scientists, and my mom and dad, who braved the border crossing just to see what the hell I’ve been building up here.

I kicked things off with the basics: it’s our 20th meetup. Twenty months straight, we’ve gathered to mix art, tech, ethics, and beer. Tonight was special… we weren’t just meeting, we were founding. The official launch of the BC + AI Ecosystem Association nonprofit.

But before bylaws and board seats, we started where we always should: in ceremony.

Indigenous Welcome: Webs and Prophecies

Gabriel George (Tsleil-Waututh Nation) stood up first. No slides. No jargon. Just lineage. He reminded us that his great-great grandmother lived in this very village before her family was forcibly relocated. He reminded us that surnames themselves are a colonial hack — his people understood identity not as a line but as a web.

And then he dropped the line that rewired the room:

“Our people saw it coming. They said one day our world would be connected by a web, and through that we would be able to speak to each other. These were prophecies preached before colonization.”

Think about that. The internet, AI, the whole lattice of connectivity — not invented by Silicon Valley, but anticipated by Indigenous knowledge systems. The elders already called it.

Gabriel didn’t just bless the space, he warned us: “When we do this work in AI, it’s good to have integrity, to have values. What is the base that you’re operating from?”

Then he sang the Eagle Song — gifted to his father as a boy — a reminder that every eagle carries our prayers to the ancestors. His voice filled the dome. It wasn’t a performance. It was anchoring.

The night started not with hype, but with history.

Carol Ann Hilton: Chaos and Creation

Next came Carol Ann Hilton, founder of the Indigenomics Institute and one of our new nonprofit’s board members. She didn’t waste time on pleasantries.

“What are we responding to?” she asked. Not “what are we building,” but what forces are pressing against us, calling us to act.

She looked straight at the mess of our world — late capitalism, environmental collapse, algorithmic harms — and said: “It’s a little fucked up out there right now… but when we experience chaos, create.”

This wasn’t motivational-poster talk. It was an invocation. Chaos is not a pause button. It’s the raw material.

She pushed us further: meaning itself has been prescribed onto our lives — colonial scripts, economic scripts, tech utopia scripts. The work is to break that prescription and write new meanings.

And her methodology?

Love. “What does it feel like to put love in the code? Love in the land? Love in the culture?”

Not poetry. Protocol.

Jos Duncan: Love + AI + Grief

Then came Jos Duncan-Asé, founder of Love Now Media in Philadelphia and my co-lead at a Google AI lab for journalists. She flew across the continent to stand with us… even though she’d just lost a loved one and had to leave right after the event to return home for a funeral.

https://www.youtube.com/watch?v=noI6xFJ13sM

She opened by asking us to close our eyes: “When was the last time you witnessed an act of love?” Then she asked us to remember the first act of love we ever saw. The room held its breath. You could feel the dome shift.

Jos deals in love… that’s her medium. She tells stories of Black, Indigenous, and brown communities through a lens of joy, abundance, and justice. So when she started experimenting with Midjourney and ChatGPT in 2022, she thought she was filling a gap, connecting the “tech working class” with the “tactile working class.”

But her community didn’t celebrate. They felt betrayed. “How could you, of all people, use AI to make images of children? To generate social media calendars?”

Her answer was to write an AI ethics policy. Not a corporate one. A community one. She spelled out exactly how she was using AI (proposals, calendars), how she wasn’t (reporting), and why it mattered (to keep Black communities from being left behind again).

“Transparency really helped people feel safe.”

Then she told us about a negotiations class, where she learned the difference between purists, pragmatists, and opportunists. She realized she was a pragmatist. Sometimes transparent, sometimes holding cards back. Her lesson? Everyone needs a personal AI ethics policy. Because when you’re faced with the choice to profit off someone else’s work, you need to know your boundaries.

But Jos didn’t stop at the personal. She zoomed out to corporate power: billion-dollar companies strip-mining IP, burning rivers dry with GPU water cooling, exploiting labor while talking “innovation.” She compared it to recycling in Philly: citizens fined for not sorting trash, while the city quietly shipped it all to Chester to be burned in an incinerator. Users carry the burden while corporations pollute with impunity.

Her call: AI advocacy as collective action.

- Educate people.

- Make demands that benefit humanity.

- Call out harm and demand correction.

- Uplift those doing it right.

She shouted out folks in the room… Lionel Ringenbach with his AI energy calculator, Anthonia and Ethos Lab teaching youth to worldbuild responsibly.

And she closed with a line that’s already become lore in our circle:

“One day AI will be smarter than us. And when that time comes, I want it to love us enough to save us.”

Peter Bittner: The Future of Work

From the heart to the gut. Peter Bittner, my co-founder at Upgrade.ai, brought the hard truths on jobs, upskilling, and what’s coming.

He reminded us of Roy Amara’s law: we overestimate tech in the short run, underestimate it in the long run. GPT-5 wasn’t the leap people wanted. That’s fine. The shift is already here.

“The bottleneck isn’t GPUs. It’s people.”

He called out companies who pay consultants millions, then whisper: “Just make our workers feel a little less scared of AI.”

Peter named AI literacy as the new computer literacy. If you can’t speak it, you’re out. He broke it into three parts:

- Hard skills (prompting, automation, coding basics)

- Human skills (critical thinking, judgment, creativity)

- Domain expertise (your story, your craft, your context)

That last piece — domain expertise — is what no model can steal. “Your lived expertise is training data too. Don’t forget it.”

And he wasn’t rosy about education. Universities are failing. Kids graduate with debt and no AI fluency, while others who self-taught land jobs that didn’t exist two years ago.

His warning: if we don’t tackle upskilling now, we face not a tech crisis but a human literacy crisis. Vulnerable workers will be left behind not by machines, but by our failure to prepare.

But he reminded us: upskilling can be fun. “If your AI training feels like death by slideshow, you’re doing it wrong.”

Hackathon: Data Becomes Story

Our hackathon with Rival Technologies has become its own mythos. We drop survey data from British Columbians into the community and let builders remix it. This round? Absolutely unhinged in the best way.

- Prajwal, a kid who just graduated high school, built a semantic map of responses that clusters into voices and then stages a roundtable dialogue between the clusters. Imagine the data itself debating: one voice saying “AI art is garbage,” another countering “It depends on prompts.” The kicker? “The project only took two days to code. Most of my time was spent thinking about the idea.”

- Sev (Bear x Bunny team) rolled out a choose-your-own-adventure data interface. You don’t just read charts. You converse with the dataset, unlock achievements, and wander its jungle guided by an AI sidekick. He pushed Claude AI so hard it literally refused, saying it was “too busy” to process emotion. Sev’s verdict: “The age of static dashboards is over.” We inducted him as the first member of the Hackathon Hall of Fame.

- Dean Shev stole the show. By day, he’s a healthcare data analyst. By night, a musician denied his dream. For this hackathon, he turned our survey data into a 17-track AI-generated album. Each song represented someone in the community: Carol Ann, Gabriel, myself, and others. It wasn’t a gimmick. It was soul. “Music is the universal language of connection.” The crowd knew it. He walked out with the $2,500 check and a new legend attached to his name.
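
For readers wondering what "clustering responses into voices" can look like in code, here is a rough sketch. It is not Prajwal's implementation: the recap only says he used embeddings and clusters, so this stand-in swaps in plain word counts for embeddings and a greedy similarity threshold for the clustering step. The function names, the `0.3` threshold, and the sample responses (two of which paraphrase the voices quoted above) are all assumptions for illustration.

```python
import math
from collections import Counter


def vectorize(text: str) -> Counter:
    """Toy stand-in for an embedding: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def cluster(responses: list[str], threshold: float = 0.3) -> list[list[str]]:
    """Greedy grouping: join the first cluster whose seed response is
    similar enough, otherwise start a new cluster."""
    clusters: list[list[str]] = []
    for text in responses:
        vec = vectorize(text)
        for group in clusters:
            if cosine(vec, vectorize(group[0])) >= threshold:
                group.append(text)
                break
        else:
            clusters.append([text])
    return clusters


# Hypothetical survey responses, not taken from the real dataset.
responses = [
    "ai art is garbage",
    "ai art is lazy garbage",
    "it depends on the prompts",
    "good results depend on the prompts you write",
]

groups = cluster(responses)
for i, group in enumerate(groups, start=1):
    print(f"Voice {i}: {group}")
```

Each resulting group could then be handed to a language model as one "voice" in a staged roundtable. That step is left out here because the recap does not describe how it was done.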

The Big Reveal: BC + AI Ecosystem Association

Finally, the reason we gathered: to name and claim what we’ve been building.

Twenty meetups. Thousands of WhatsApp messages. Hackathons, courses, subgroups, spin-offs. Until now it’s been duct tape and vibes. But last week we registered the BC AI Ecosystem Association nonprofit society.

It’s a container. A canoe. A way to hold all this wild energy without losing it.

Already we’ve got:

- Subgroups: Surrey AI, Squamish AI, Mind+AI+Consciousness, AI in Education, Applied AI in Industry.

- Resources: a funding directory (already helping members land grants), three rounds of public opinion datasets from Angus Reid, an ecosystem map of 480 BC AI orgs, and a ChatGPT-style assistant trained on all our community transcripts.

- Membership: 34 humans signed up on Day 1. That’s 34 voices we can bring to government, funders, media, and each other.

Critics say we’re just “creatives making pretty pictures.” Wrong. We’re wrestling with ethics, business models, governance, and cultural futures. This is not the AI Network of BC 2.0. This is DIY, grassroots, open-source, and future-facing.

Community Announcements

The launch wasn’t the end. It was a beginning. We closed the night with signals of what’s coming:

- Ethos Lab (Anthonia + PJ): AI + Education meetup on Sept 11. Youth program launching Sept 19 for high school students.

- Surrey AI (Riv + Matthew): Living-room AI salons. Next on Sept 16. Discount code VANAI for folks from the city.

- SIGGRAPH crew (Kevin + friends): Showcased wild experiments — fake home-shopping channels, no-code game engines, procedural worlds. Film + AI subgroup forming next month.

Closing the Circle

The bar stayed open, the gulab jamun flowed (Ganesha’s birthday!), and we celebrated one year of hosting in the Space Centre. We signed for year two.

We hugged my mom. We toasted the future. And we cleaned up our mess, because that’s how community survives.

Key Takeaways

- AI is not just technology. It’s prophecy, culture, and values embodied.

- Transparency is survival. Communities need ethics policies as much as corporations.

- Upskilling is urgent — but it can be fun, joyful, and shared.

- Data doesn’t just inform. It can sing, argue, and tell stories.

- Community > corporations. Always.

- BC isn’t trying to be Silicon Valley. We’re building something distinctly ours: rooted, creative, and regenerative.

What’s Next

- Sept 11: AI + Education @ Ethos Lab

- Sept 16: Surrey AI Meetup

- Sept 24: Vancouver AI Meetup #21

- Dec 13: BC AI Awards at the Space Centre

We’ve been building this ecosystem on vibes, duct tape, and stubborn love. Now it has a nonprofit body. A voice. A map. A future.

And like Jos said, one day AI may be smarter than us. When that happens, I want it to remember nights like this. To remember that we trained it with love.