BC + AI’s Ethics Community Responds to Canada’s National AI Task Force Consultation

How the AI Ethical Futures Lab is giving voice to the communities left out of Canada’s AI strategy

On a Thursday evening in late March, a diverse group gathered at Aperture Coffee in Vancouver’s Chinatown. Artists, technologists, educators, philosophers, and concerned citizens, all drawn together by a shared conviction: the conversation about Canada’s AI future shouldn’t be dominated by industry voices alone.

This was the AI Ethical Futures Lab’s contribution to the People’s AI Consultation, a civil society response to what 160+ organizations called a “mad rush to a largely predetermined conclusion.”

The Problem: A Consultation That Missed the Point

In October 2025, the Canadian government announced a 30-day “national sprint” on AI strategy. The task force was industry-dominated. The survey questions contained embedded assumptions. And when 11,000+ submissions arrived, the government analyzed them using four commercial LLMs, a detail documented in the official summary of inputs, with no transparency on methodology, prompts, or data handling.

The result? A report containing “conflicting recommendations reflecting sharply different visions” with no clear implementation priorities.

As legal scholar Teresa Scassa put it: this was a predetermined conclusion dressed up as public engagement.

Enter the AI Ethical Futures Lab

The AEFL is part of the BC + AI Ecosystem, a grassroots community building human-centered AI spaces across British Columbia. Unlike conferences dominated by vendor pitches, AEFL creates space for genuine dialogue about where we want AI to take us.

“This is one of those little things that fruits on the tree that have blossomed here,” explained one organizer. “It’s not a fancy production. It’s a dialogue-driven exploration.”

The lab had already been tracking Canada’s AI policy moves, writing open letters, and demanding a seat at the table. The People’s AI Consultation gave them a framework to channel community voices upward.

What Emerged: Five Themes from the Room

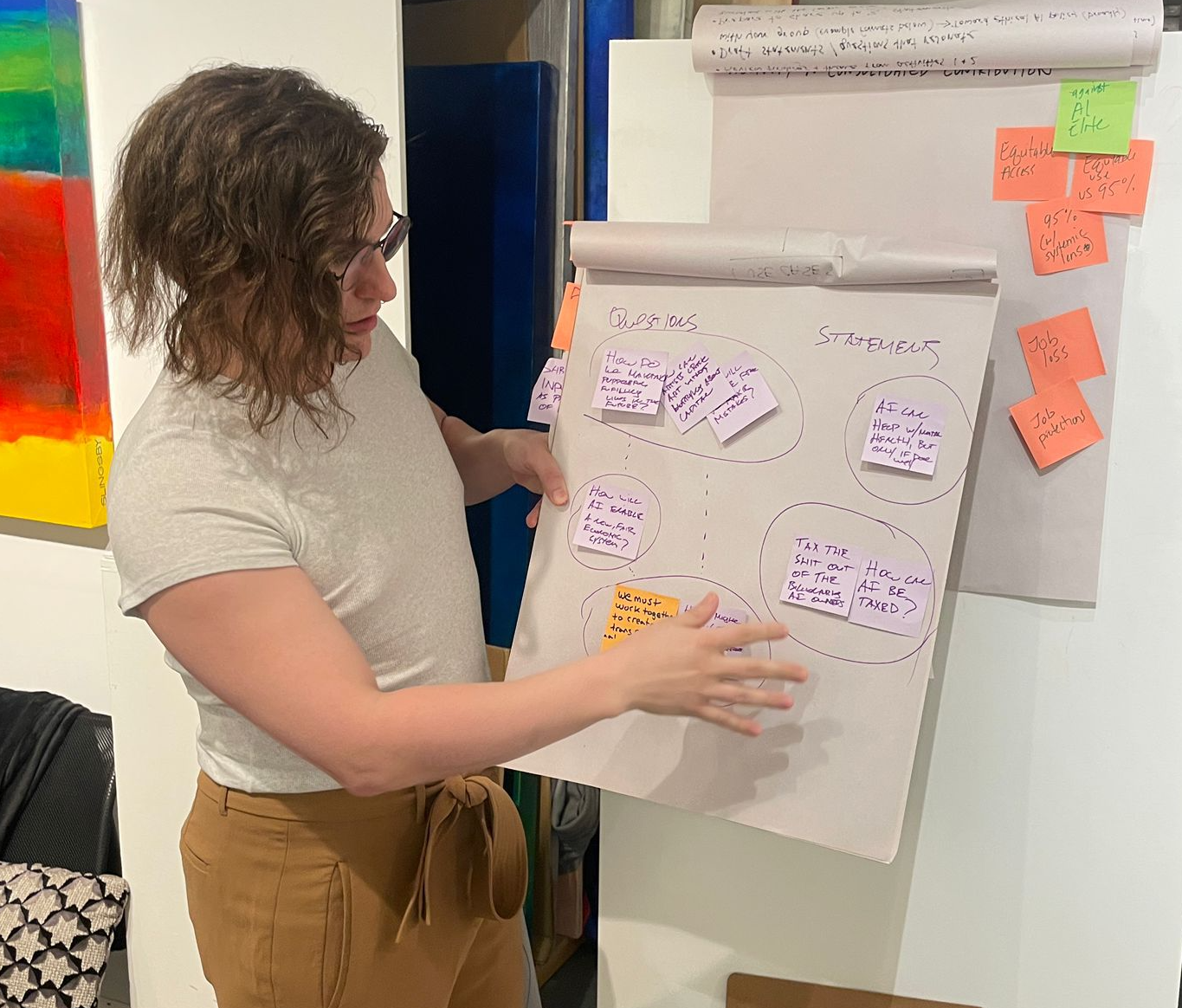

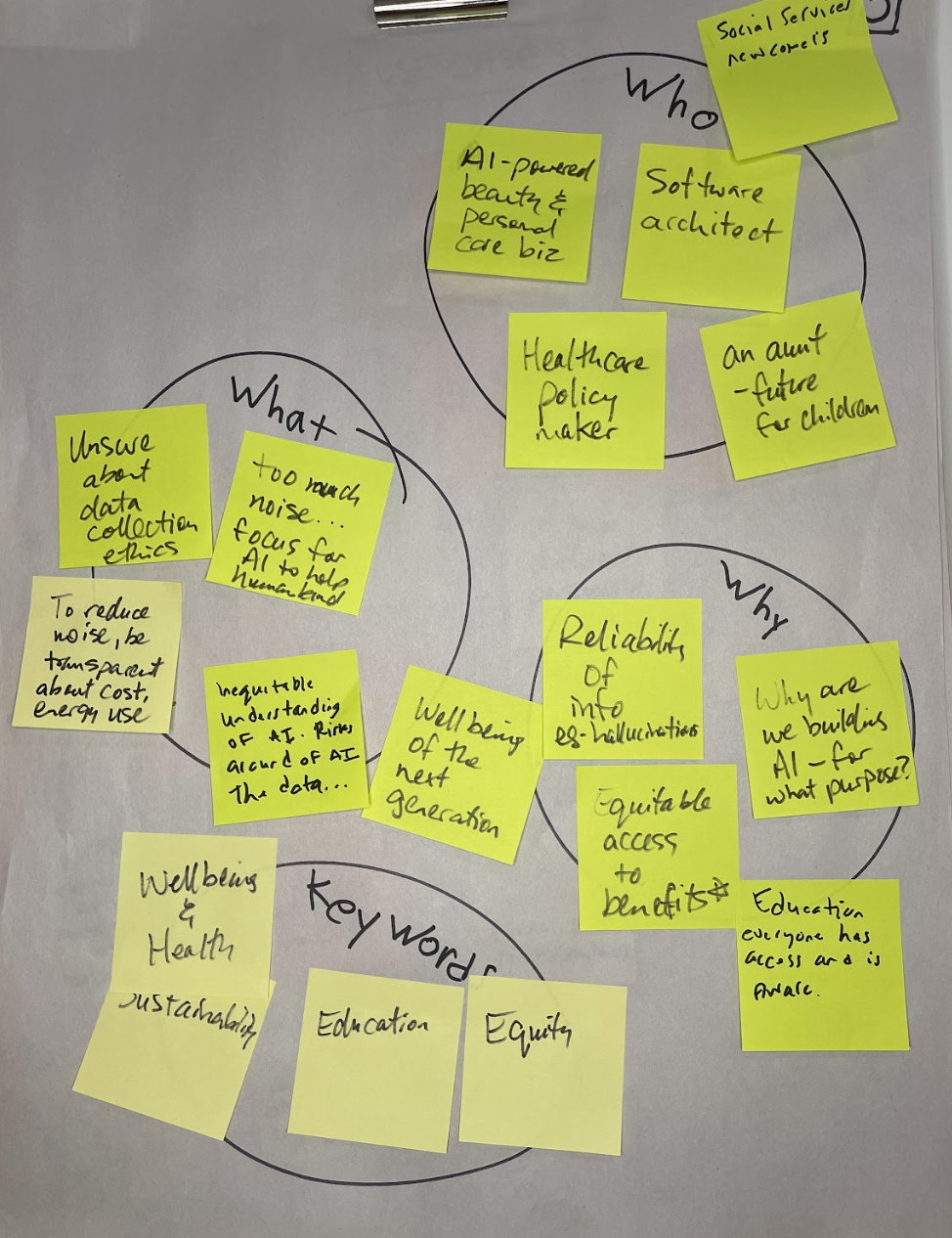

Jesi Carson, a design researcher with 15 years of experience in participatory democracy, facilitated the evening. Groups of five or six worked through three activities: sharing lived experiences, clustering concerns, and crafting statements.

1. The 95% Problem

“We don’t want an AI elite just because you’re more advanced in your AI usage,” said one group. “We don’t want that 5%. We want the 95% to come along.”

But another participant pushed back: “When you say 95%, are you devaluing human activity? What happened to my ability to generate something original?”

The tension between AI augmentation and human dignity ran through the entire evening.

2. Education as Kindness

“If you don’t equip people with ways to use these tools properly, people can get fired, people can make career-ending mistakes. Educating them. Education is kindness.”

Groups emphasized that “curious minds pop up in all sorts of unexpected places”: in small-town high schools, in marginalized communities, in unexpected corners.

The question isn’t whether AI will reach them, but whether they’ll have the resources to use it well.

3. Building a Bigger Boat

One group landed on a striking metaphor: “How do we build a bigger boat?”

The idea: instead of fighting over shrinking resources, use AI as a bridge between different value systems. “We can find a way where we can all communicate and build long-term relationships with people even on the other side of the world. Augmenting our meatspace relationships.”

4. The Generation Gap

A provocative observation: “We are the only generation in history that developed socially and emotionally without AI. And now it has to be introduced to us. Whatever works for us might not work for kids going to kindergarten right now.”

And then the kicker: “AI art is already called Boomer Art. My Gen Z friends, when they see an ad with AI-generated art, they’re like, ‘fuck that place.’”

Youth resistance to AI may be the counterbalancing force nobody is accounting for.

5. Canada’s Sovereignty Question

“We do not agree with giving up or compromising sovereignty of our countries to AI. Canada has to make its own foundational AI product and be a leader.”

The room was clear: this isn’t just about ethics in the abstract. It’s about who controls the infrastructure of thought.

The Hard Question: What Do We Submit?

As the session wound down, tension emerged. Should the group submit a collective statement to the People’s AI Consultation?

“This is way too soon. We can’t do this!”

“If we don’t get our voice to decision-makers now, we don’t have a voice.”

“I’m also of the option to submit our own as well.”

The facilitator wisely let it breathe: “We may not have consensus on the messages we’re trying to say.”

That’s the point. Real consultation includes diverse perspectives, not forced consensus. The evening ended with more questions than answers.

That’s exactly what a genuine exploration should produce.

Why This Matters Beyond the Room

The AEFL People’s Consultation wasn’t just about Canada’s AI strategy. It was a proof of concept for a different kind of engagement:

- No artificial deadline. The People’s AI Consultation remains open, unlike the government’s 30-day sprint

- Human synthesis. No opaque LLM processing; transparent documentation of what people actually said

- Diverse voices. Artists, philosophers, educators, technologists, not just industry executives

- Local anchoring. Grounded in Vancouver, on unceded Coast Salish territories, in a real room with real people

Join the Conversation

The AI Ethical Futures Lab meets monthly at venues across Vancouver. The next session explores a single question: who owns what when machines learn from everything?

Get involved:

- Join the AEFL WhatsApp group (request invite via BC + AI)

- Attend BC + AI Office Hours (Fridays, 12-1 PM PT)

- Submit your own response at peoplesaiconsultation.ca

Policy gets written whether we participate or not. The question is whether industry voices alone dominate, or community voices push for something better.

Thirty days wasn’t enough. An industry-dominated task force wasn’t representative. Opaque LLM processing wasn’t trustworthy.

The People’s AI Consultation exists because civil society refused to accept that as “public engagement.”

Technology isn’t neutral, and neither are we.

About the AI Ethical Futures Lab

AEFL is a special interest group within the BC + AI Ecosystem focused on humanity’s goals, AI alignment, and building constructive conversations about where we want AI to take us. Founded in early 2026, the lab brings together ethicists, technologists, artists, educators, and concerned citizens for monthly dialogues on AI governance and policy.

About BC + AI

The BC + AI Ecosystem Association is a nonprofit building community-driven AI spaces across British Columbia. Through meetups, workshops, certification programs, and advocacy, BC + AI ensures that British Columbians have a voice in the AI revolution.

Written by Kris Krüg | March 2026 | Vancouver, BC

Want to host an AEFL session in your community? Contact [email protected]