AI & Real-Time Content Creation: Behind the Scenes with Kevin Friel

Summary

ONE SENTENCE SUMMARY:

AWS Community Day Vancouver showcased innovative AI-driven content creation tools, enhancing interviews and artistic performances with real-time generative technology.

MAIN POINTS:

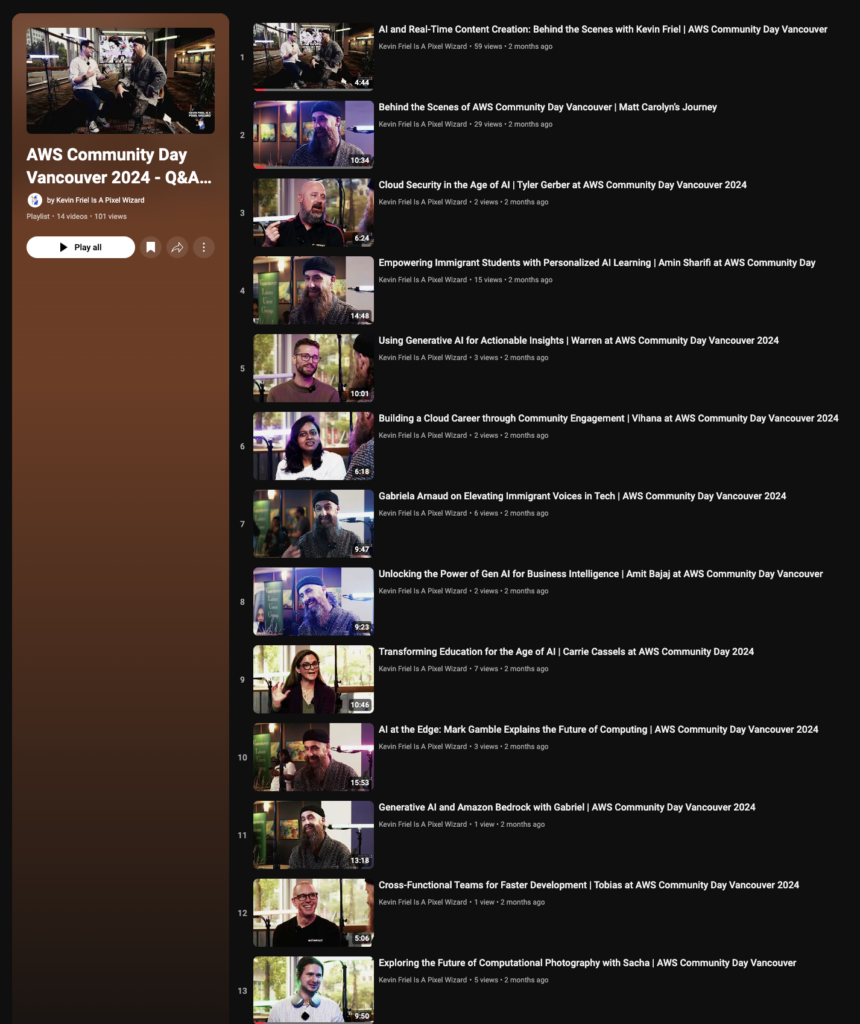

- AWS Community Day Vancouver featured a Gen AI Q&A Vibe Lounge.

- Kris Krüg and Kevin Friel hosted the event, discussing AI-driven production setups.

- A three-camera real-time cutting setup with isolated camera recordings was used.

- AI-generated lighting and Stable Diffusion animation systems enhanced the event’s ambiance.

- Personalized generative backgrounds were created for each speaker using AI.

- Real-time prompt changes influenced background graphics during interviews.

- The system was also tested with dancers, artists, and beatboxers.

- High-end, real-time generated videos for social media were emphasized.

- Kevin Friel, aka Mr. Pixel Wizard, highlighted empowering artists with AI tools.

- The collaboration aims to produce scalable and innovative content efficiently.

TAKEAWAYS:

- AI technology can significantly enhance event production and audience engagement.

- Personalized generative content offers a unique and immersive experience.

- Real-time AI systems can adapt content dynamically during live events.

- Artists can benefit from AI-driven tools to create high-quality social media content.

- Collaboration and innovation in AI can lead to scalable content creation solutions.

Transcript

AWS Community Day Vancouver: Gen AI Q&A Vibe Lounge

Hosted by Kris Krüg and Kevin Friel

Kris Krüg: AWS Community Day Vancouver presents the Gen AI Q&A Vibe Lounge hosted by yours truly, Kris Krüg. Oh my God, is this the real elusive Mr. Pixel Wizard? The hunter becomes the hunted! The man behind the curtain, there you are.

Kris Krüg: Haha, hey, what’s up internet! I’ve got Kevin Friel here. We just wrapped our day at AWS Community Days and wanted to share some production notes and thank-yous. Kevin, tell us about what you built here today. What are we sitting in and looking at?

Kevin Friel: Yo, we built Noah’s Ark in a day, my friend! We’ve got a three-camera real-time cutting setup with isolated cameras also recording individually as a safety measure, capturing all that Big Data goodness. We’re using amazing film lights from Nanlite and these tubes around us are borrowed from the world-famous teleporter!

Kris Krüg: Ah yes, the AI-generated Pixel Wizard teleporter… That’s our AI-generated lighting cues in real-time with music. We also have AI generation happening in the background with a real-time Stable Diffusion animation system overlaying graphics. Be careful what you say around talented people; they might go home, stay up all night, and come back with a ComfyUI Flux Stable Diffusion generative background system that’s DMX compatible!

Kevin Friel: Exactly! We’ve built a whole ecosystem thanks to other talented contributors who created these products, allowing us to create this vibe pit that has really elevated the interviews. You’ve done an amazing job making people comfortable to speak their truths, values, and tech in this wild, wacky space.

Kris Krüg: Thanks, man. I love people, and empowering them through technology is a belief of mine. You make it easy and you make me look good. These backgrounds you created had our producer Gabriela interview all the speakers to generate keywords, resulting in generative backgrounds based on the speakers’ favorite music, places, and foods.

Kevin Friel: Yep. We’re also changing these prompts in real-time. When I hear keywords that could make interesting graphics, I type them in. That’s the next background coming up.

Kris Krüg: That’s fascinating. We should have a cue where the screen flashes when a background is created by something said during the talk—just a little pop of creativity.

Kevin Friel: Yeah, that would be cool. We’ve also been experimenting with dancers, artists, and beatboxers in these environments. This setup is versatile for those creative performances.

Kris Krüg: Absolutely. It’s an exciting time to produce amazing content, and the key is scalability. We can create vibe content at a scale we’ve never seen before, empowering artists with this system. It’s nice for you and me to relax with a beer, knowing robots are editing our videos back home!

Kevin Friel: Go robots, go! Check me out at kevinfriel.com and on all socials as Mr. Pixel Wizard.

Kris Krüg: Thanks a lot, Kevin. I appreciate your time and creative vision. Can’t wait to see what we come up with next!

Kevin Friel: Thank you, Kris. Always a hoot with you! That’s a wrap. Cut!

Outline

AI-Driven Content Creation at AWS Community Day Vancouver: Rethinking Real-Time Interaction

Hook:

At AWS Community Day Vancouver, we didn’t just talk about AI—we embedded it into the event experience, exploring how generative tech can elevate live content creation. What happens when artists and technologists come together with adaptive AI tools? We create a space where visuals and interviews reflect each person’s story in real time. Here’s a look at how we made that happen and what it could mean for the future of live production.

1. The Concept: Making AI a Creative Partner

- AWS Community Day Vancouver set the stage for a practical experiment with AI-powered production tools. This wasn’t about flashy tech; it was about seeing how AI could support authentic storytelling in real time.

- My goal was to humanize the technology by focusing on adaptability and responsiveness—qualities often missing in static productions. Together with Kevin Friel, we aimed to create a “vibe lounge” where every visual and lighting change could adapt to the flow of the conversation.

2. Behind the Scenes: Real-Time Production Set-Up

- Three-camera setup with isolated recordings allowed us to capture multiple angles, each with an eye for preserving spontaneity and depth. Every camera, including live cuts and isolated captures, fed data that could be later processed and repurposed for content.

- The AI-driven lighting system brought a new layer of nuance, syncing in real time with music and mood changes in the space. We used this to create a fluid experience, almost like the visual equivalent of adaptive music in video games, where every beat of the interaction subtly changed the space.

3. AI-Generated Backgrounds: Adaptive Visuals with Intent

- To make each speaker’s experience unique, we used AI-generated backgrounds built from keywords gathered in pre-event interviews (favorite music, places, and foods), capturing something meaningful about each individual.

- What stood out was the real-time prompt adjustment during interviews: Kevin typed in new prompts whenever he heard keywords that could make interesting graphics. This allowed for an ongoing, organic connection between what was being said and what was being seen, almost like an interactive layer over traditional interview footage.

4. Testing the Setup with Artists and Performers

- One of the most revealing moments came when we tested the system with dancers, artists, and beatboxers. The tech had to adapt to spontaneous, non-verbal expression—a powerful insight into how AI can support, rather than constrain, creative flow.

- This testing showed how AI could be a genuine partner in live performance, not just an overlay but an element that could respond to the performers’ rhythm and energy. It’s one thing for AI to react to spoken prompts, but it’s a whole other challenge for it to respond to a dancer’s movement or a beatboxer’s improvised rhythm.

5. Real-World Value: High-Quality Content, Low Resource Burn

- This setup also offered a more efficient way to create high-quality social content without the need for extensive post-production. With AI in the mix, our real-time generated videos were ready to go live within minutes, optimized for social channels without sacrificing quality.

- For me, this wasn’t just about speed; it was about how AI could support sustainable content creation by reducing the time and resources typically needed for high-production-value outputs. This has real potential for artists and brands working with limited budgets but who still want impactful, professional results.

6. Beyond the Hype: Implications for the Future of Creative Spaces

- This experience confirmed something I’ve been thinking a lot about: AI has the potential to bridge the gap between large-scale production and DIY culture, giving creators tools that adapt to their needs rather than the other way around.

- The adaptability we achieved here hints at a future where creative spaces can be as dynamic as the people in them. Imagine a world where every live event has an AI toolkit that shifts as the conversation unfolds, where venues and stages are no longer static backdrops but living extensions of each artist’s vision.

Conclusion: Realizing AI’s Potential as a Creative Partner

- AI is often framed as a replacement for human creativity, but what we saw at AWS Community Day shows a different potential: AI as a creative partner, an element of responsiveness in real-time settings that can bring authenticity and depth to live productions.

- With the right tools and intent, adaptive AI can help artists create high-quality content in a way that feels less produced and more authentic. It’s about putting AI in a supporting role, letting it adapt to the artist’s voice rather than overshadow it.

Key Takeaways:

- Personalized, real-time adaptation: AI-driven content tools bring a new layer of personalization that shifts dynamically with each artist.

- Scalable and efficient content creation: By reducing resource demands, AI offers a sustainable way forward for creators who need quality without excessive cost.

- AI as a collaborative tool: This experience underscored that AI’s real promise lies in supporting, not replacing, human creativity.

Final Note: Reimagining the Role of Technology in Art

- This isn’t about swapping artists for algorithms; it’s about creating systems that can flow with creative energy, adaptable and open to the unexpected. It’s a small but essential shift in how we think about the relationship between tech and creativity, and it’s just the beginning.

Socials

KK

- “At AWS Community Day Vancouver, we didn’t just showcase AI; we put it to work in real time. Every conversation, every beat became a part of a living, breathing visual experience. This is what the future of creativity looks like—adaptive, immersive, and personalized.”

- “In our Gen AI Q&A Vibe Lounge, every speaker saw their own story reflected in real-time visuals. Personalized generative art isn’t just tech—it’s a new medium for storytelling that evolves with the people it represents.”

- “We pushed the limits at AWS Community Day with AI-driven backgrounds that adapted to each speaker’s words. No static screens, no one-size-fits-all. Just visuals that captured the energy of every conversation. AI isn’t here to replace creativity; it’s here to amplify it.”

- “AI + real-time production = an interview space like you’ve never seen. Imagine AI-crafted backgrounds and dynamic lighting that shift and flow with each guest’s vibe. That’s what we brought to life in Vancouver. Welcome to adaptive content creation.”

- “At the intersection of tech and art, we’re building a future where visuals respond to the pulse of a room. Watching AI-generated visuals adapt to each speaker’s words was a reminder: tech can be a partner in creativity, not just a tool.”

Kevin

- “Imagine an interview space where every word matters. At AWS Community Day Vancouver, our setup used generative AI to adjust backgrounds based on each guest’s story, creating a dynamic experience that was truly one of a kind.”

- “Our three-camera setup and AI-powered lighting weren’t just technical choices—they were part of building an adaptive environment where each conversation could shape its own atmosphere. AI can make real-time storytelling feel organic.”

- “Creating custom, AI-driven visuals for each speaker was about giving their stories a unique visual language. At AWS Community Day, we took the idea of personalized content and scaled it in a way that truly captured each guest’s vibe.”

- “Experimenting with AI-driven setups for dancers and performers made it clear: tech that adapts in real time has the power to elevate spontaneity rather than restrain it. AI tools in a live setting offer a new kind of responsiveness.”

- “We’re entering a new phase of production—AI-driven visuals, real-time adaptability, and scalable, high-quality content. Working alongside Kris Krüg to bring this setup to AWS Community Day felt like the beginning of something much bigger.”

“We built Noah’s Ark in a day, my friend!” Kevin Friel tells me with a grin that suggests he knows exactly how wild that sounds. We’re standing in Mister Pixel Wizard’s AI Teleporter setup at AWS Community Day Vancouver, surrounded by glowing Nanlite tubes and a three-camera array that looks like it was teleported in from next Thursday.

If you told me a year ago that one of Dune’s VFX coordinators would be revolutionizing indie production from a corner of Vancouver’s tech scene, I might have been skeptical. But watching Kevin work his magic with this system, it all makes perfect sense. He’s taken everything he learned in the Hollywood machine and remixed it into something entirely new.

They call him the Pixel Wizard, and at first I thought it was just another tech scene nickname. But spend five minutes watching him orchestrate this setup – lights dancing in perfect sync with audio, backgrounds morphing based on conversation keywords, cameras capturing every angle while AI weaves it all together – and you realize it’s not clever branding. It’s prophecy.


“Check this out,” he says, typing a quick command that transforms the entire space into what looks like the inside of a neural network. The lights pulse with an intelligence that feels almost alive. This production setup is a glimpse into the future of creative technology.

Kevin didn’t set out to build a revolution. He just kept asking “what if?”

What if we could give indie creators access to Hollywood-grade production value?

What if we could make environments that respond to human creativity in real-time?

What if we could build systems that amplify rather than replace human artistry?

Those questions led him from the structured world of big-budget VFX into uncharted territory. “Most people think you need millions of dollars and a whole crew to create cinematic content,” he tells me, adjusting a virtual slider that makes the lights dance. “We’re proving you just need the right tools and a little bit of magic.”

The Modern-Day Merlin’s Lab

Step into Kevin Friel’s Vancouver voltron den and you’ll find yourself at the beating heart of a creative revolution. Amidst a labyrinth of Nanlite tubes and more cameras than a Tarantino flick, the dude they call the Pixel Wizard is busy rewiring reality one photon at a time.

See, Kevin and I are collaborators, co-conspirators… brothers-in-arms on a quest to hack the ever-loving shit out of the media landscape. It’s a mind-meld of epic proportions – Kev’s galaxy brain technical chops and my hurricane of ideas and community building. We’ve been deep in the trenches together, cooking up some straight-up sorcery.

“Kris and I met at a pivotal moment,” Kev tells me between virtual production sprints. “I was diving deep into AI and needed someone who could not only understand the tech but push it to the absolute brink. Kris was that trigger – a visionary madman who could see past the pixels.”

I can’t help but grin. Damn right I could. When we started jamming, it was like we’d found the missing code to each other’s motherboards. Our ideas crackled and sparked, igniting a wildfire of possibility.

Cinematic Podcasts & Videoblogs

One of our most balls-to-the-wall projects is Mister Pixel Wizard’s Generative AI Teleporter, an AI-juiced, real-time cinematic lighting setup that’s been blowing minds left and right. This is a sentient beast of a system that syncs lights with audio like some kind of cyberpunk dreamscape. It also understands what podcasters are talking about and adjusts backgrounds and visuals accordingly.

“Everyone’s gotta churn out content like a sweatshop these days,” I say, hacking into the mainframe. “We wanted to build a tool that not only made that grind easier but shot it full of artistic steroids.” And hoo boy, does it deliver. Wire up a musician and watch as the lights pulse and throb to every beat, painting the room in electric hues. Strap in a dancer and marvel as the environment bends and sways to their every move, like the whole fucking universe is their canvas.

Creatives Inside the Teleporter

But the tech is only half the equation. The real key to this whole mad science experiment? The community, baby. Through Future Proof Creatives and our Vancouver AI Community Meetups, Kev and I have been stitching together a patchwork of artists, technonauts, and straight-up dreamers. We’re building a scene while swapping ideas and setting the stage for a neon-soaked mutiny on the stagnant hulk of old media.

“Vancouver’s a powder keg of creative energy,” I say, watching as a dancer paints the air in impossible geometries. “Let’s light the fuse and watch the fireworks… ;)”

And that’s exactly what we did at AWS Community Day Vancouver 2024. We assembled our showcase of AI tools and creative workflows and unleashed them like a pack of starving wolves. Every speaker became a part of our radical matrix, their words and vibes translated into living, breathing datastreams.

“Imagine an interview space where every word matters,” Kevin says. “Our setup used generative AI to adjust backgrounds based on each guest’s story, creating a dynamic experience that was truly one of a kind.”

“We showcased AI by putting it to work in real time. Every conversation became part of a living, breathing visual experience. This is what the future of creativity looks like—adaptive, immersive, and personalized.”

Democratizing the Means of Creation

But we’re not just here to make pretty pictures. We’re here to storm the gates of the creative citadel and hand out the keys to the kingdom. “Every artist is their own startup now,” I say, hammering out grant proposals between sips of neon beer. “They need a constant IV drip of high-grade content to stay in the game. That’s where we come in.”

With our “Content as a Service” model, we’re turning high-end production from a walled garden into an open-source playground. Pop-up installations, subscription-based setups – we’re making Hollywood-grade magic accessible to anyone with a story to tell.

This is about blasting open the airlock and letting the creativity breathe.

The Shepherds of a New Era

When Kevin dropped the trailer for Shepherds at our November meetup, it was like watching a digital prophecy unfold. Born in the crucible of pandemic isolation, those endless months when reality felt more surreal than fiction, it was Kevin’s fever dream of humanity pressed against the bleeding edge of possibility, finally clawing its way into existence through pure technological sorcery.

“Working on Shepherds was an incredible journey,” Kevin reflects. “It was about pushing the limits of what AI can do in film production. But it was also about the conversations we had—the philosophical debates about where technology is taking us.”

Over months of digital alchemy, Kev wrestled an arsenal of AI tools into submission, transforming those haunting visions from phantom whispers into raw, pulsing pixels. This was straight-up technological necromancy.

The creative pipeline for Shepherds reads like a cyberpunk mediamaker’s wet dream. A custom GPT model dissecting scripts like a surgical AI. A TouchDesigner system engineering visual prompts with the precision of a quantum computer. Magnific upscaling images like they’re being beamed in from another dimension. RunwayML Gen-3 Alpha Turbo animating characters and cameras with an almost sentient fluidity.

Two AI-generated tracks mutated into a soundscape via Udio, then alchemized into a full score. The whole mad science experiment crystallized in DaVinci Resolve 19, where Kevin performed his final surgical strikes of cut, color, and mix. Maya Bruck’s titles adding that crucial human fingerprint—proof that we’re not just passengers, but co-pilots in this wild ride.

The Road to Valhalla

But beneath all the techno-sorcery and grandiose vision, there’s something far more vital pulsing at the heart of our operation: a friendship forged in the crucible of innovation.

Kevin is one of my inspiring co-pilots in this mad dash to the future. He’s my brother from another motherboard, a kindred spirit in the quest for the next paradigm shift. Our brainstorms crackle with an energy that borders on the supernatural, ideas ricocheting off each other like particles in a hadron collider.

As I sit here watching Kevin conjure miracles out of thin air, I can’t help but feel like we’re on the cusp of something seismic. We’ve got partnerships brewing with the biggest names in tech, plans to storm stages from Vancouver to Valhalla. But no matter how high we climb or how far we roam, one truth stays carved in stone: Kevin Friel and Kris Krüg are in this game for the long haul, and we’re playing for keeps.

So strap in, plug in, and hold on to your frontal lobes. The Pixel Wizard and the Visionary are just getting started, and the future’s looking bright enough to sear your eyeballs.

The old world’s living on borrowed time. The new one? It’s ours for the taking. See you on the other side of the event horizon, comrades. The revolution won’t be televised – it’ll be teleported.

AI Teleporter: AI-Driven Lighting and Generative Background System (Technical Overview)

PixelWizards’ AI Teleporter is an innovative AI-driven system that synchronizes lighting effects and generates real-time visual backgrounds in harmony with music and performance art. Developed by Kevin Friel, known as Mr. Pixel Wizard, in collaboration with Kris Krüg of Future Proof Creatives and the Vancouver AI community, this technology combines advanced AI algorithms with professional lighting and generative visuals to create dynamic, immersive experiences.

Key Features

- Real-Time Audio Synchronization: Instantaneous adjustment of lighting and visuals based on live audio inputs.

- AI-Assisted Mood and Visual Selection: Utilizes AI to select appropriate moods, color schemes, and background visuals, enhancing emotional impact.

- Plug-and-Play Functionality: Easy setup with minimal technical preparation required.

- Flexible Control During Performance: On-the-fly changes to moods, color schemes, and background visuals.

- Customizable Effects: Granular control over individual or grouped lights and visual elements.

- Multi-Channel Audio Analysis: Supports detailed analysis for nuanced synchronization.
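To make the audio synchronization idea concrete, here is a minimal sketch of one common way to drive a light level from live audio: measure the loudness of each block of samples, smooth it so the light rises fast and fades slowly, and scale it to an 8-bit value. This is an illustration only, not the Teleporter's actual algorithm; the function names and the attack and release constants are assumptions chosen for the example.

```python
import math


def rms(samples):
    """Root-mean-square level of a block of samples in the range [-1.0, 1.0]."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def smooth(previous, target, attack=0.6, release=0.1):
    """Move toward the target quickly on rises (attack) and slowly on falls (release).

    The attack and release rates are illustrative assumptions, not tuned values.
    """
    rate = attack if target > previous else release
    return previous + rate * (target - previous)


def to_dmx(level):
    """Clamp a 0.0-1.0 level and scale it to an 8-bit value (0-255)."""
    return round(max(0.0, min(1.0, level)) * 255)


def brightness_track(blocks):
    """Turn a sequence of audio blocks into one brightness value per block."""
    level = 0.0
    values = []
    for block in blocks:
        level = smooth(level, rms(block))
        values.append(to_dmx(level))
    return values
```

Feeding it a silent block, a loud block, and another silent block shows the intended behavior: the value jumps up on the loud block and then decays gradually instead of snapping back to zero.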

Technology Stack

Lighting Equipment:

- Nanlite Pavotube 15XR lights

- Nanlite 30XR light

- Nanlite Forza 60C light

Control System:

- Maestro DMX AI lighting control system

Backend Processing:

- ComfyUI and Stable Diffusion for AI-driven control algorithms and real-time generative backgrounds
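For readers new to DMX, the sketch below shows the data model that any DMX controller ultimately works with: a universe of up to 512 channel slots, each holding an 8-bit value. It does not use or imitate the Maestro DMX system's interface. The three-channel RGB fixture layout and the helper names are hypothetical; real fixtures, including the Nanlite units listed above, offer several channel modes documented in their manuals.

```python
DMX_CHANNELS = 512  # one DMX512 universe carries up to 512 channel slots


def blank_universe():
    """Return a universe with every channel set to zero."""
    return bytearray(DMX_CHANNELS)


def set_rgb(universe, start_channel, red, green, blue):
    """Write an RGB triple at a 1-based start channel.

    Assumes a hypothetical fixture mode that uses three consecutive
    channels for red, green, and blue.
    """
    if not 1 <= start_channel <= DMX_CHANNELS - 2:
        raise ValueError("fixture does not fit in the universe")
    for offset, value in enumerate((red, green, blue)):
        if not 0 <= value <= 255:
            raise ValueError("DMX values are 8-bit (0-255)")
        universe[start_channel - 1 + offset] = value
    return universe
```

A controller would then send this buffer out through a DMX interface many times per second; that transport step is hardware-specific and left out here.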

Real-Time Generative Backgrounds with ComfyUI and Stable Diffusion

An integral component of the Teleporter system is the use of ComfyUI and Stable Diffusion models to generate real-time visual backgrounds. This feature enhances the immersive experience by providing dynamic, AI-generated visuals that complement the performance and lighting effects.

Features:

- Real-Time Generation: Produces high-quality backgrounds on-the-fly, reacting to live audio and performance cues.

- Customizable Prompts: Allows input of text prompts, images, or parameters to influence the style and content of the backgrounds.

- Seamless Integration: Synchronizes generative visuals with the AI-driven lighting system for a cohesive experience.

- Low Latency Processing: Optimized algorithms ensure minimal delay, keeping visuals in sync with performances.

- Versatile Styles: Supports a wide range of visual styles—from abstract and artistic to realistic environments.
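The customizable prompts feature can be pictured as a small text-assembly step: keywords gathered from a speaker ahead of time are merged with keywords typed in during the conversation. The sketch below is one possible way to do that, not the system's actual logic; the function name, the default style suffix, and the term cap are all assumptions made for illustration.

```python
def compose_prompt(profile_keywords, live_keywords,
                   style="cinematic, soft volumetric light", max_terms=8):
    """Merge pre-interview keywords with keywords heard live into one prompt.

    Live keywords are listed first, duplicates are dropped
    case-insensitively, and the keyword list is capped at max_terms
    before the style suffix is appended.
    """
    seen = set()
    terms = []
    for word in list(live_keywords) + list(profile_keywords):
        cleaned = word.strip()
        key = cleaned.lower()
        if key and key not in seen:
            seen.add(key)
            terms.append(cleaned)
    return ", ".join(terms[:max_terms] + [style])
```

For example, `compose_prompt(["jazz", "rainforest", "ramen"], ["teleporter", "Jazz"])` yields `teleporter, Jazz, rainforest, ramen, cinematic, soft volumetric light`, with the repeated keyword kept only once.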

Technical Implementation:

- ComfyUI Interface: Acts as the user interface and workflow manager for configuring Stable Diffusion models and settings.

- Stable Diffusion Models: Leverages advanced diffusion models for image generation, capable of producing high-resolution visuals.

- Hardware Acceleration: Utilizes GPU processing for efficient real-time generation.

- Pipeline Integration: Generative backgrounds are integrated into the video output, allowing for live compositing with performers.
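As a rough sketch of how a new text prompt might reach ComfyUI from a script, the example below patches the text input of one node in an API-format workflow and posts it to a local ComfyUI server's `/prompt` endpoint. The workflow shown is a hypothetical, heavily trimmed stand-in (a real one has many more nodes and would be exported from ComfyUI), and the node id `"6"` and the server address are assumptions to adjust for your own setup. How the Teleporter itself drives ComfyUI is not described in the source.

```python
import copy
import json
from urllib import request

# Assumed local ComfyUI address; change it to match your install.
COMFYUI_URL = "http://127.0.0.1:8188/prompt"

# Hypothetical, trimmed workflow in ComfyUI's API format.
# A real workflow also needs model loader, sampler, and output nodes.
EXAMPLE_WORKFLOW = {
    "6": {
        "class_type": "CLIPTextEncode",
        "inputs": {"text": "placeholder", "clip": ["4", 1]},
    },
}


def build_payload(workflow, text_node_id, new_text):
    """Return the JSON request body with one node's text input replaced."""
    patched = copy.deepcopy(workflow)  # leave the template workflow untouched
    patched[text_node_id]["inputs"]["text"] = new_text
    return json.dumps({"prompt": patched}).encode("utf-8")


def queue_prompt(payload, url=COMFYUI_URL):
    """POST the payload to a running ComfyUI server and return its JSON reply."""
    req = request.Request(
        url, data=payload, headers={"Content-Type": "application/json"}
    )
    with request.urlopen(req) as response:
        return json.loads(response.read())
```

With a server running and a complete workflow loaded, `queue_prompt(build_payload(workflow, "6", "neon rainforest"))` would queue one generation. Keeping the payload builder separate from the network call makes it easy to test prompt changes without a GPU attached.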

Applications:

- Live Performances: Enhances stages with dynamic, responsive backgrounds that adapt to the music and performance.

- Music Videos: Provides unique, AI-generated backdrops without extensive post-production.

- Virtual Events and Webinars: Elevates online presentations with engaging and interactive visuals.

- Interactive Art Installations: Creates environments where visuals respond to audience interactions or environmental inputs.

- Broadcasting and Streaming: Offers streamers dynamic backgrounds, enhancing viewer engagement.

Benefits:

- Enhanced Immersion: Combines lighting and visuals for a fully immersive audience experience.

- Creative Control: Artists can tailor both lighting and backgrounds to fit their vision.

- Cost-Effective Production: Reduces the need for physical sets and extensive VFX work.

- Increased Engagement: Unique visuals capture audience attention and enhance the performance’s appeal.

Unique Value Proposition with Generative Backgrounds:

The integration of real-time generative backgrounds sets the Teleporter system apart by offering a comprehensive visual solution. This not only enhances the performance space but also provides artists with a powerful tool for storytelling and brand building.

Applications

- Performance-Based Music Videos: Deliver visually stunning content for platforms like TikTok and Instagram.

- Live Concerts and Events: Transform venues with synchronized lighting and dynamic backgrounds.

- High-Concept Product Reveals: Add impactful visuals to product launches and promotional events.

- Hybrid Virtual Productions: Integrate with virtual sets for film and media projects.

- Gaming and eSports Streaming Setups: Create immersive environments for live streams.

- Podcasting and Multi-Camera Setups: Elevate visual appeal for podcasts and talk shows.

- Corporate Presentations and Keynotes: Enhance professional presentations with dynamic visuals.

- Interactive Art Installations: Offer artists tools for creating responsive, engaging installations.

Business Model: Content as a Service (CaaS)

Targeting high-volume content creators—musicians, dancers, spoken word artists, and speakers—the Teleporter provides unique, standout content without extensive technical setup or high upfront costs.

Service Tiers

- Pop-Up Installations: Short-term setups for events or intensive content creation sessions.

- Subscription Model: Regular access to the system for ongoing content needs.

- One-Time Sessions: Individual sessions modeled after services like Michelle Diamond’s “Tiny Sessions.”

Additional Services

- AI-Enhanced Post-Processing: Advanced editing with AI-driven effects and enhancements.

- Live Streaming Support: Technical assistance for broadcasting live performances with integrated visuals.

- Grant Application Assistance: Aid in securing funding through grants.

- Training Workshops: Education on maximizing the system’s capabilities.

- Consulting for Permanent Installations: Guidance on integrating technology into fixed venues.

Unique Value Propositions

- Psychological Performance Enhancement: Dynamic visuals boost performer engagement and energy.

- Turnkey Visual Solution: Offers a comprehensive system for high-quality, multi-channel content creation.

- AI-Driven Creativity Boost: Empowers artists with cutting-edge tools to expand their creative horizons.

- Scalability: Suitable for individual creators to large-scale events and productions.

- Integration with Emerging Technologies: Compatible with advancements like AI avatars and digital cloning.

Partnerships and Integrations

- Music Software Companies: Potential DAW integration for seamless control.

- Virtual Production Providers: Collaborations for enhanced virtual environments.

- Live Streaming Platforms: Partnerships to optimize streaming capabilities with integrated visuals.

- Event Organizers: Engagements with entities like MUTEK and South by Southwest.

- Grant-Giving Organizations: Work with FACTOR, Creative BC, etc., to support artists.

- AI Technology Companies: Collaborations to advance system capabilities.

R&D Roadmap

- User-Friendly Interface Development: Simplify controls for broader accessibility.

- Plug-and-Play Kits: Create kits tailored for various use cases.

- Wireless Control Integration: Explore CRMX (wireless DMX) for flexible setups.

- Enhanced AI Capabilities: Expand functionalities in generative visuals and post-processing.

- Custom Hardware Development: Design lighting fixtures optimized for AI control.

- Virtual Production Integration: Seamless incorporation into virtual sets and environments.

Cinematic Podcasts: Hollywood-Grade Storytelling Meets Generative AI

URL: https://youtu.be/m8Pbij-M55g?si=fijEwTwwBqL79IZA